Human-in-the-Loop Document Migration

How corrections become training data in aviation compliance systems

How corrections become training data in aviation compliance systems

TrustFlight

2023–2024

Lead Product Designer, Front-End Development

Every airline operates on hundreds of pages of critical documentation: flight crew operating manuals, maintenance procedures, compliance handbooks, navigational charts. These documents form the backbone of safe flight operations. For decades, they existed as paper manuals weighing up to 40 pounds per pilot, literally carried in flight bags to the cockpit.

The industry has been moving these documents to Electronic Flight Bags (EFBs), tablets and devices that replaced the heavy briefcases pilots once lugged onto every flight. The transition involved converting existing documentation to standardized digital formats, primarily PDF, for long-term readability and regulatory compliance.

But here's the problem: despite operators implementing modern maintenance IT systems, documents still circulate primarily as PDFs when moving between systems or adopting new platforms. To adopt our authoring platform, these PDFs need conversion to structured, editable content, not just digitized, but truly structured. Every regulation number, every nested procedure, every compliance table must survive the conversion intact.

This is harder than it sounds, because PDFs were never designed to hold meaning.

A PDF doesn't say "this is a heading" or "this is a nested table." It says "draw this text at coordinates X,Y in 14pt bold." What looks like a table is just lines and text positioned to look like one. What looks like a hierarchy is just indented text. There's no data model, only geometry pretending to be structure.

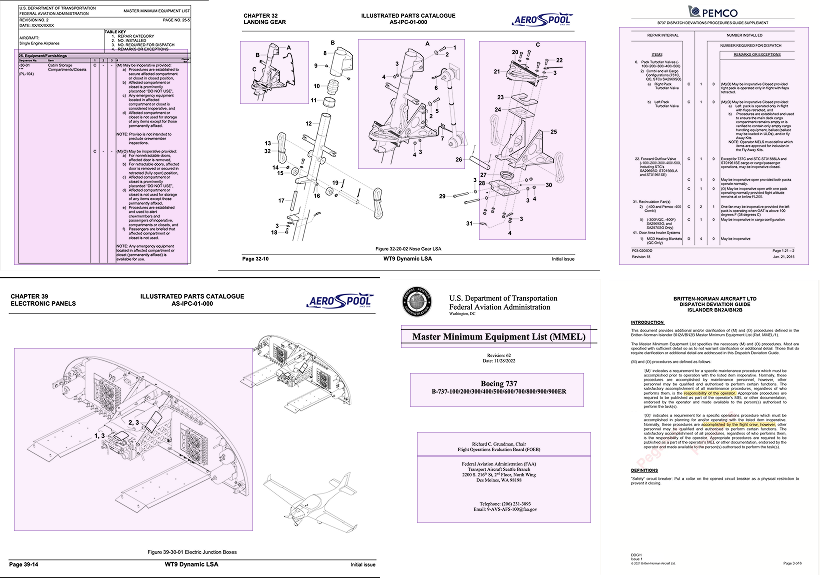

Examples of nested tables, regulatory numbering, and inconsistent formatting across aviation documents. These visual ambiguities demonstrate why AI struggles with reliable structure extraction.

When structure fails, everything downstream breaks. Generic PDF-to-Word conversion mangles numbering, flattens nested tables, and scrambles cross-references. The result isn't editable content, it's formatting wreckage.

Manual retyping is impossible for 300-page compliance documents where every misplaced decimal can trigger an audit finding. Outsourced conversion services are expensive and slow. Pure AI extraction isn't much better because advanced models struggle most where accuracy matters most.

Regulatory numbering becomes ambiguous. Deeply nested hierarchies like CAR OPS 1.1.1.1.1 must remain intact for compliance traceability, but the AI has to distinguish section numbers from procedural steps from list items. Context humans infer instantly but file formats never encode.

Tables collapse under nesting. Aviation manuals commonly nest tables four levels deep with merged cells, multi-column spans, and color-coded indicators that carry regulatory meaning. Generic extraction tools see only boxes and text, not the relationships between them.

Formatting provides inconsistent signals. These documents evolve over a decade with different authors using different conventions. A supposed heading might share the same font size as emphasized body text. Operator-specific patterns vary by airline, document type, and sometimes by chapter.

The alternative to generic conversion tools isn't pure manual work or hoping AI gets it right. It's a system that learns from operator-specific patterns through targeted human correction.

When an operator uploads their first manual, the system attempts automated extraction but flags ambiguities: is this nested numbering or a procedural step? Is this a merged table cell or separate fields? Human reviewers resolve these flags, and their corrections become training data. The next time the system encounters similar patterns in that operator's documents, it applies the learned logic automatically.

The goal isn't fixing every error manually. It's teaching the system each operator's unique document logic so subsequent conversions require minimal intervention. You can't anticipate every edge case, but you can build a system that learns them.

This is creating a smartdoc by importing a PDF and converting it to smart doc.

The extraction system doesn't just read PDFs, it interprets them using aviation-specific rules. It recognizes that "1.1(a)" and "1.1.1" aren't interchangeable in regulatory language. It preserves nested tables and merged cells because procedural hierarchy matters. It strips headers and footers to isolate actual content, treats "CAR OPS 1.1.1.1" as one procedure rather than four nested lists, and outputs structured data that maps directly to our editor's schema.

The system analyzes where content sits on the page and what that content actually means. A number followed by indented text isn't just typography, it's a nested procedure. A grid of lines isn't decoration, it's a regulatory table. This spatial and semantic analysis identifies headers, tables, diagrams, and regulatory patterns with precision tuned for aviation documentation.

This approach has a fundamental constraint. PDFs encode presentation, not structure. With ten years of different authors using different conventions, the AI can't reliably tell which 14-point bold text is a header and which is just emphasis. Visual spacing needs deterministic rules, but every operator breaks those rules differently. No static ruleset can capture every variation. The AI provides a strong baseline, not perfection. Human review fills the gap.

Human review drives the system's accuracy. The AI makes predictions, and operators verify and correct them through direct manipulation. For example, the system identifies where headers and footers end and actual content begins, then operators adjust these boundaries by dragging lines in the interface. Make the correction once and it applies across hundreds of pages instantly.

The interface matters because it determines how efficiently operators can provide input. Early versions asked for text descriptions, which was slow and imprecise. Visual input captures exact coordinates and what used to take 20 minutes of back-and-forth now takes two minutes. A 300-page document gets boundary corrections in under five minutes.

[Video: operator dragging boundaries in a PDF preview, correcting multiple pages at once. A 300-page document gets fixed in under five minutes.]

Once boundaries are set, the system extracts structured content and operators review it in split-screen: original PDF on the left, structured output on the right, scrolling in sync. Detection and correction are separate tasks. Operators focus purely on identifying problems by scanning the original, spotting discrepancies, and describing what's wrong. They type corrections like "This table should preserve nested structure" or "Numbering sequence broke at step 4" or "This is an MMEL exception table, not a checklist." Side-by-side review is three to four times faster than inline editing because it eliminates the cognitive load of fixing problems while still identifying them.

[Video: both views scroll together. A broken table appears. Misnumbered list. The operator types: "This table should preserve nested structure."]

Every correction becomes structured training data. The system takes natural language feedback and uses it as guidance for future documents. Learned patterns persist for each operator and evolve with every upload. After two or three reviewed documents, accuracy jumps noticeably because the system starts recognizing each operator's specific documentation style.

The loop captures what no static model could anticipate: custom table formats, operator-specific numbering conventions, amendment tracking patterns, cross-reference styles, and edge cases unique to specific fleets or document types. All the variations that would take months to encode manually get learned automatically through operator corrections. Where outsourced conversion services take weeks and cost thousands per document, this pipeline processes a 300-page manual in under an hour of operator time. The first document requires the most review. By the third, the system handles 80-90% of structure correctly without intervention.

[Video: Document 1 gets corrections. Document 2 processes more accurately. Improvement metrics climb across iterations.]

Automation alone can't handle regulatory complexity, but automation that learns from human judgment can. Every correction becomes a rule. Every rule becomes a pattern. Every pattern becomes part of the model. The pipeline refines itself, one operator and one document at a time.

Migration timeline

Review time per doc

Structural accuracy

Migration velocity increased dramatically. What previously took several weeks per 400-600 page manual now completes in 2-4 days including human review. Operator review time dropped from 3-5 hours per document to 25-45 minutes after the system learned baseline conventions.

Structural accuracy improved significantly. After 2-3 reviewed documents, the system stabilizes around 95% structural accuracy, with most remaining corrections focused on formatting refinement rather than content extraction errors.

Adoption shifted from impossible to viable. The platform went from "you'll need to reauthor everything" to "we'll convert your existing docs, you review the output." Once operators saw their own content accurately reconstructed, confidence followed.

Formatting remains the most complex challenge. Each document type brings its own spacing, indentation, and visual conventions. Instead of chasing a single universal solution, the system applies document-type-specific formatting logic and validation rules that evolve with feedback. The improvements are cumulative. Each correction sharpens the model's understanding and makes output more predictable over time.

Production taught us lessons we couldn't have learned in development. The first was clarity of roles. Early on, we asked the AI to do everything: interpret meaning, preserve formatting, infer structure, recreate layout fidelity. Production showed us where to split responsibilities. Let the AI understand what content means. Use deterministic rules to decide how it should look. Tables with merged cells need human review not because the AI failed, but because PDFs don't encode table structure. Systems infer it from positioned visual elements, and complex regulatory tables break that inference. Spacing and indentation belong in post-processing with explicit rules.

Visibility matters more than we expected. When we started showing operators how their corrections improved future conversions, their investment in correction quality increased noticeably. People engage more when they see their impact. The first document shapes everything. Operators form mental models based on that initial experience, so we learned to make first reviews thorough but transparent about what the system handles well and where it needs guidance.

Word-level accuracy is largely solved. The true challenge is formatting accuracy, deciding how extracted content becomes structured data the system can act upon. Correct structure is the foundation for every downstream feature: automatic tables of contents, regulation tracking, revision records. The system must strip away dynamic elements while keeping the structural backbone intact.

The environment is favorable. Underlying AI models continue improving rapidly. Tools for building transformation layers and post-processing pipelines are getting better. By focusing on incremental accuracy improvements and building systems that learn from corrections, we're riding alongside the technology's improvement curve. Each model update lifts the baseline. Each refinement to our learning system compounds those gains. The challenges of extraction at scale are far from solved, but being part of the effort to make it a little more reliable, and a little more understood, has been deeply worthwhile.

Conversational incident reporting with automated risk assessment for aviation operators.